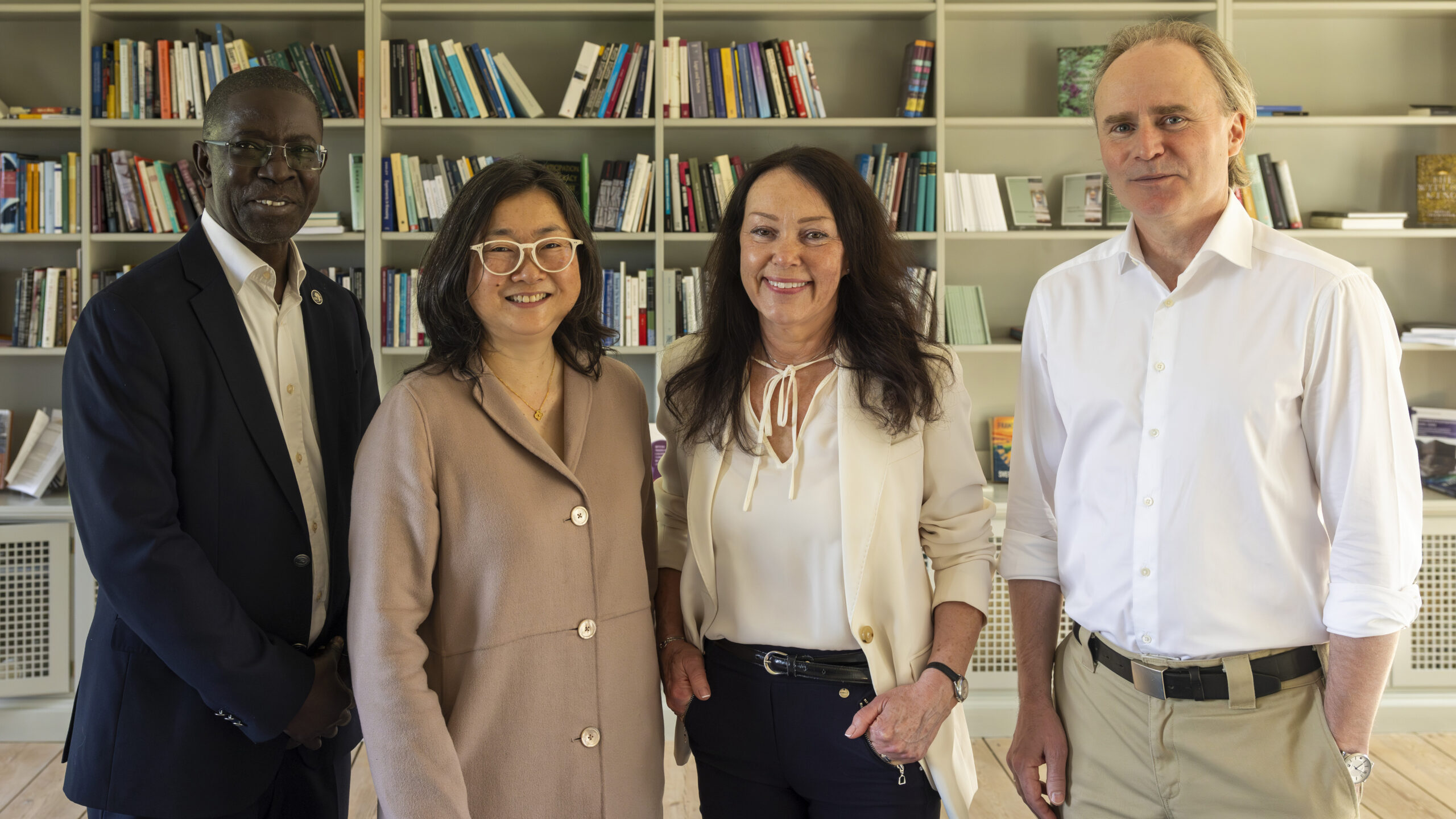

Professor Anna-Sara Lind and Associate Professor Francis Lee, both principal investigators within WASP-HS, and chairs of the annual conference AI for Humanity and Society 2022, share their reflections on the outcomes of the annual event.

We are truly in formative times when it comes to the implementation of AI. This is abundantly clear from the reflections presented here on the WASP-HS blog following the AI for Humanity and Society conference in November 2022. We currently have a unique chance to shape our future with AI, as AI is being shaped and communicated in an integrated way across society today. We can see this in rules and regulations of different sorts, and in organizations, both emerging and already existing. The actors involved in, and affected by, the development of AI are also increasing in number and variety. The common denominator of it all is that these developments of AI increasingly relate to issues that can be analyzed from a perspective of power.

We would like to share a couple of reflections related to these questions about AI in society.

First: of special interest at the WASP-HS conference 2022 was AI and human rights, in the sense of how AI relates to bias, justice, equality, predictability, and transparency. However, rather than proposing algorithmic solutions to these problems, the conference highlighted these questions as part of producing regulation, legitimacy, and fairness in a wider social and organizational context.

Second: AI is not one thing. This is abundantly clear from the conference interventions and the recent WASP-HS blog posts. It also means that AI will be implemented differently in different places. Just as different kinds of electric motors are integrated into, for example, washrooms, kitchens, trains, scooters, and cars, AI is, and will be, integrated into a multitude of settings, with a multitude of complex problems related to power, regulation, legitimacy, and fairness.

Third: if we want to create “AI for all”, we need to abandon the dream of a universal yardstick for good or bad AI. What is a fair or just AI system in one setting might not be fair or just in another. Thus, AI systems cannot be universally transparent, fair, or just, and the ethics of AI cannot focus solely on programming transparent and just systems. Sustainable AI solutions need to consider the social, legal, organizational, and institutional dimensions of AI systems.

How, then, do we think about adapting systems to different societies, contexts, and organizations? How do we create responsible research and innovation that is also aware of these differences? How can we imagine a better future with all of these different AI systems? AI systems need to be understood in their varied implementations and contexts, and we can help do this by crossing disciplinary and organizational boundaries.

As was clear from the conference, it is impossible to create one algorithm or one set of rules for a just AI society. Just and ethical AI is not only an issue of programming; it is a matter of adapting the electric motors of our time to the situations where they are used. After all, the social, ethical, legal, or organizational frameworks for electric scooters might not make sense for electric mixers, or for electric trains.

Therefore, we all need to create and contribute to a discussion that crosses the borders between societal sectors and academic disciplines. During the conference, we aimed to maximize discussion and conversation, as we are firmly convinced that this is the only way to create a better future with AI. We are very happy that the conference was able to reflect this multitude of perspectives, and we hope that we can continue learning from each other through this diversity.

In sum, AI has never been as topical as it is today. AI is in more common use than ever before, and its use raises many questions and issues for businesses, NGOs, researchers, and politicians. In these formative times, the possibilities, risks, and consequences of AI have never been more pressing. All actors that come into contact with, create, or work with AI have different experiences of its practical realization and of the challenges they perceive. We therefore tried to invite many different kinds of actors: from the public and private sectors, from civil society and business, and from NGOs and politics.

Moving forward, we believe we should ask questions along the lines of the following: Who can influence where this AI revolution is going to end up, in the various places where it will happen? Who are the constituents of an AI society? And how do we include all these different constituents in the future that we want to see with AI? What does it mean to build AI for all of society? What would we like to achieve with this ongoing AI boom? And why do we want to achieve it? And whose “whys” should we take into account in creating the future AI society? Is AI something special? Or is it just another facet of the automation that has occurred throughout history? What can we learn from the industrial revolution? What can we learn from the advent of desktop computing? What sorts of risks can AI lead to? Is it difficult to work with AI? Does it have to be? And are the solutions to potential problems binary in character or not?

These questions highlight the importance of WASP-HS and show why this research program is crucial for the future of our society and for humanity.